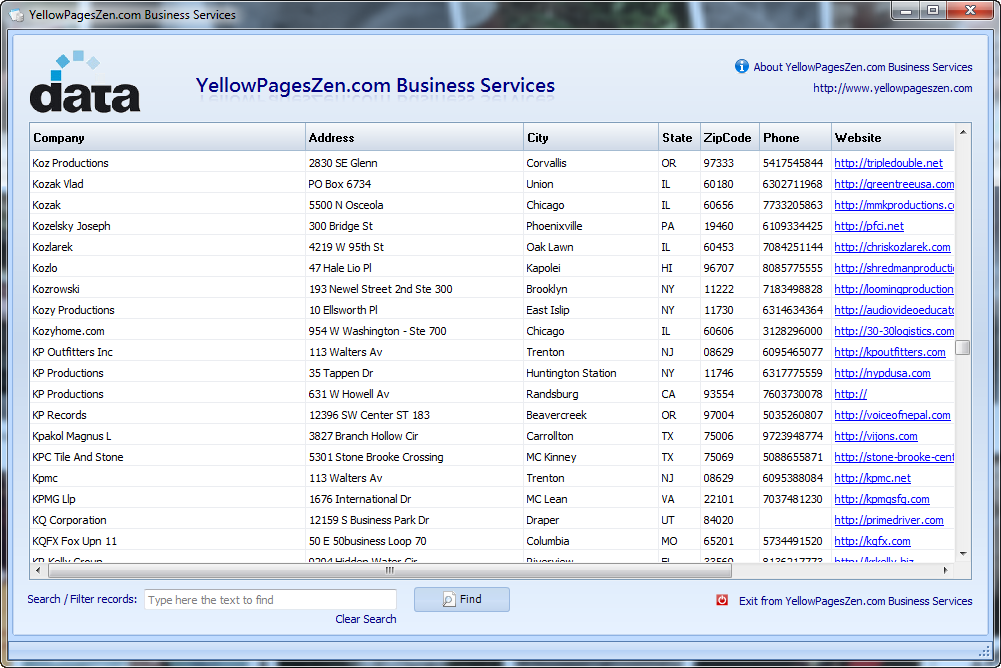

In this tutorial we'll use httpx as our HTTP client and parsel for HTML parsing. We'll stick with CSS selectors as YellowPages HTML is quite simple. Optionally, we'll also use loguru, a pretty logging library that'll help us keep track of what's going on.

These packages can be easily installed via the pip command: `$ pip install httpx parsel loguru`

Alternatively, feel free to swap httpx out for any other HTTP client package, such as requests, as we'll only need basic HTTP functions, which are almost interchangeable between libraries. As for parsel, another great alternative is the beautifulsoup package, or anything else that supports CSS selectors, which is what we'll be using in this tutorial.

When we submit a search request, YellowPages takes us to a new URL containing pages of results: once we click find, we are redirected to a results page with 427 results. Let's take a look at how we can scrape this efficiently.

To scrape YellowPages search, we'll form a search URL from given parameters and then iterate through multiple page URLs to collect all business listings. The search URL takes in a few key parameters: the query (e.g. "Japanese Restaurant"), the location and the page number.

First, let's take a look at scraping a single search page, like Japanese restaurants in San Francisco, California: `search?search_terms=Japanese+Restaurants&geo_location_terms=San+Francisco,%2C+CA`. For this, we'll be using the parsel package with a few CSS selectors. The scraper imports `asyncio` along with `urlencode` and `urljoin` from `urllib.parse`, defines a type hint container for business preview data (this object just helps us keep track of what results we'll be getting) and a function that parses a search page for business preview data. That function loops over `sel.css(".organic div.result")`, uses helpers such as `first = lambda css: result.css(css).get("").strip()` and collects fields like `"categories": many(".categories>a::text")`.

In this code, we first isolate each result by its bounding box and iterate through each of the 30 result boxes. In each iteration, we use relative CSS selectors to collect business preview information such as phone number, rating, name and, most importantly, the link to the business's full information page.

Let's run our scraper and see the results it generates. To avoid being instantly blocked, we should use request headers like those of a common web browser, for example `"accept": "text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,image/apng,*/*;q=0.8"`. To run our scraper we need to start an httpx session, `async with httpx.AsyncClient(limits=limits, timeout=httpx.Timeout(15.0), headers=BASE_HEADERS) as session:`, and then print the results with `print(json.dumps(result_search, indent=2))`.

We can scrape a single search page, so now all we have to do is wrap this logic in a scraping loop: `async def search(query: str, session: httpx.AsyncClient, location: Optional = None) -> List:`
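To make the "form a search URL, then iterate through page URLs" idea concrete, here is a minimal sketch that uses only the standard library. It is not the tutorial's original code. The host in `SEARCH_URL`, the `page` query parameter and the helper names `make_search_url` and `make_page_urls` are assumptions made for illustration. The 30 results per page and the 427 total results are taken from the text above.

```python
import math
from typing import List, Optional
from urllib.parse import urlencode

# Assumption: the search endpoint lives at this address. The article itself only
# shows the path and query string ("search?search_terms=...").
SEARCH_URL = "https://www.yellowpages.com/search"
RESULTS_PER_PAGE = 30  # the article mentions 30 result boxes per page


def make_search_url(query: str, location: Optional[str] = None, page: int = 1) -> str:
    """Build a search URL from the query, location and page number."""
    params = {"search_terms": query}
    if location:
        params["geo_location_terms"] = location
    if page > 1:
        # Assumption: pagination is controlled by a "page" query parameter.
        params["page"] = page
    return f"{SEARCH_URL}?{urlencode(params)}"


def make_page_urls(query: str, location: Optional[str], total_results: int) -> List[str]:
    """List one URL per results page, given the total result count."""
    total_pages = math.ceil(total_results / RESULTS_PER_PAGE)
    return [make_search_url(query, location, page) for page in range(1, total_pages + 1)]


# 427 results at 30 per page means 15 pages to request.
urls = make_page_urls("Japanese Restaurants", "San Francisco, CA", total_results=427)
print(len(urls))
print(urls[0])
print(urls[-1])
```

Each of these URLs could then be requested through the httpx session and handed to the search page parser, which is roughly what a scraping loop needs to do.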
